In the past decade, blockchain technology has emerged as a revolutionary solution for building decentralized and secure systems. Beyond its association with cryptocurrencies, blockchain offers a vast field of opportunities for developers interested in creating innovative applications.

Blockchain development involves creating and maintaining decentralized applications (dApps) and smart contracts that run on platforms like Ethereum, Hyperledger, and Binance Smart Chain. To work in this sector, it’s essential to master:

- Programming languages: Solidity is essential for Ethereum, while Go, JavaScript, and Python are useful for other platforms. With the recent rise of the Solana ecosystem, Rust has become a highly sought-after language.

- Cryptography: Understanding techniques such as asymmetric encryption, hash functions, and digital signatures is crucial to ensure application security.

- Data structures and algorithms: Familiarity with structures like Merkle trees and consensus algorithms such as Proof of Work (PoW) and Proof of Stake (PoS) is necessary for designing efficient blockchain systems.
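To make the Merkle tree idea more concrete, here is a minimal Python sketch of how a Merkle root can be computed from a list of transactions. The helper names (`sha256`, `merkle_root`) and the sample transactions are made up for illustration, and duplicating the last node on odd levels is only one common convention. Real chains differ in hashing and encoding details.

```python
import hashlib


def sha256(data: bytes) -> bytes:
    """Hash helper used for both leaves and inner nodes."""
    return hashlib.sha256(data).digest()


def merkle_root(leaves: list[bytes]) -> bytes:
    """Return the Merkle root of the given leaves (illustrative helper)."""
    if not leaves:
        raise ValueError("at least one leaf is required")
    level = [sha256(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2 == 1:
            # Odd number of nodes: duplicate the last one (one common convention).
            level.append(level[-1])
        # Hash each adjacent pair to build the next level up.
        level = [sha256(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


# Hypothetical transactions, encoded as bytes.
transactions = [b"alice->bob:5", b"bob->carol:2", b"carol->dave:1"]
root = merkle_root(transactions)

# Changing a single transaction changes the root.
tampered = merkle_root([b"alice->bob:50", b"bob->carol:2", b"carol->dave:1"])
print(root.hex())
print(root != tampered)
```

Because the root depends on every leaf, a node can detect tampering by comparing a single 32-byte value instead of the full transaction list.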

Additionally, using specialized tools enhances the development process:

- Development environments: Frameworks like Truffle and Hardhat streamline the compilation, testing, and deployment of smart contracts.

- APIs and libraries: Web3.js and ethers.js enable interaction between web applications and the blockchain.

- Version control systems: Git is indispensable for efficient collaboration and source code management.

Adopting best practices is more important than ever for success in blockchain projects:

- Writing secure code: It’s vital to prevent vulnerabilities such as reentrancy attacks and integer overflows.

- Thorough documentation: Keeping detailed records of the code and its functionality facilitates audits and future collaboration.

- Rigorous testing: Implementing unit and integration tests ensures the correct functioning of smart contracts.
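The reentrancy risk mentioned above is easier to see with a toy model. The sketch below is plain Python, not Solidity, and the `Vault` class, the `attack` function and the amounts are all hypothetical. It only illustrates why updating state before making an external call (the "checks-effects-interactions" pattern) matters.

```python
class Vault:
    """Toy model of a contract holding deposits (not real Solidity semantics)."""

    def __init__(self, update_before_call: bool):
        self.update_before_call = update_before_call
        self.balances: dict[str, int] = {}
        self.total = 0

    def deposit(self, who: str, amount: int) -> None:
        self.balances[who] = self.balances.get(who, 0) + amount
        self.total += amount

    def withdraw(self, who: str, on_receive) -> None:
        amount = self.balances.get(who, 0)
        if amount == 0 or self.total < amount:
            return
        if self.update_before_call:
            self.balances[who] = 0  # effect first (checks-effects-interactions)
        self.total -= amount
        on_receive(amount)  # external call: the receiver may re-enter withdraw()
        if not self.update_before_call:
            self.balances[who] = 0  # too late: re-entrant calls saw the old balance


def attack(vault: Vault, who: str) -> int:
    """Withdraw, and re-enter withdraw() every time funds are received."""
    received = []

    def on_receive(amount: int) -> None:
        received.append(amount)
        vault.withdraw(who, on_receive)  # re-enter while the first call is still running

    vault.withdraw(who, on_receive)
    return sum(received)


def run(update_before_call: bool) -> int:
    vault = Vault(update_before_call)
    vault.deposit("victim", 30)
    vault.deposit("attacker", 10)
    return attack(vault, "attacker")


print(run(update_before_call=False))  # vulnerable ordering: prints 40, the whole vault
print(run(update_before_call=True))   # safe ordering: prints 10, the attacker's own deposit
```

A unit test that asserts on these two totals is a simple example of the kind of regression test worth keeping next to a contract.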

Blockchain development represents an exciting frontier in technology, offering opportunities to create innovative solutions and transform industries. Mastering technical skills, using the right tools, and adhering to best practices will position developers to lead in this dynamic field. As blockchain adoption continues to grow, those prepared to face its challenges will be in a privileged position to drive the digital future.

-P. Riera

Artificial Intelligence has transformed many industries, and software development is no exception. GitHub Copilot, powered by OpenAI’s large language models, is a tool that assists developers in many programming languages, making it easier to write code, boosting productivity, and reducing errors.

GitHub Copilot has become an essential tool for development. It quickly and effectively provides real-time code suggestions. This article explores its features, limitations, and the impact it’s having on programming.

What is GitHub Copilot?

GitHub Copilot is an AI tool developed by GitHub in collaboration with OpenAI. It was originally based on the OpenAI Codex model, which was trained on publicly available source code, including public GitHub repositories. Designed to interact directly with code as it is written, Copilot provides suggestions for code snippets, full functions, and even complex algorithms, all tailored to the context of the project.

Key Features

1. Smart Autocomplete

Copilot suggests complete code snippets as you type, significantly reducing development time.

Example in C#:

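// Developer comment: Method to fetch products from a database
// (assumes a Product entity and "using Microsoft.EntityFrameworkCore;" for ToListAsync)
public async Task<List<Product>> GetProductsAsync()
{
    using var context = new AppDbContext();
    return await context.Products.ToListAsync();
}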

Copilot will analyze the intent and generate the appropriate code.

2. Code Generation from Descriptions

You can write comments in natural language, and Copilot will generate the corresponding code, saving developers from having to write repetitive code by hand.

Example:

// Generate a method that calculates the sum of two numbers

Copilot will generate:

public int Sum(int a, int b)
{
    return a + b;
}

3. Refactoring and Optimization

It suggests improvements, analyzes your code, and provides refactorings and optimizations to make it more efficient and readable.

4. Support for Different Technologies

Besides .NET and C#, Copilot supports many programming languages, including Python, JavaScript, TypeScript, Ruby, Go, and more.

5. Contextual Learning & Repetitive Tasks

As you use Copilot, it adapts to your coding style, analyzes your existing code, and improves its suggestions. It also offers alternatives if it detects code that could be improved.

6. Code Explanation

Copilot can explain code line by line, making it easier to understand what it does and helping you learn the language.

Example:

/explain @"^[A-Za-z]{1,3}\d{1,4}$"

Copilot will generate:

The code snippet @"^[A-Za-z]{1,3}\d{1,4}$" is a regular expression (regex) pattern used to validate a specific string format, in this case, a license plate number. Let’s break it down:

^: Asserts position at the start of the string.

[A-Za-z]{1,3}: Matches 1 to 3 alphabetic characters. Square brackets [] define a character class including uppercase (A-Z) and lowercase (a-z) letters. The quantifier {1,3} means this part must occur at least once and up to three times.

\d{1,4}: After the letters, matches 1 to 4 digits. \d is a shorthand for any digit (same as [0-9]). {1,4} indicates it must occur between one and four times.

$: Asserts position at the end of the string.

In short, this regex validates strings that start with 1 to 3 letters followed by 1 to 4 digits, with nothing else before or after, which makes it suitable for validating alphanumeric license plate formats.
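An explanation like this is worth checking against a few inputs before trusting it. The sketch below does that in Python with the `re` module rather than .NET, so engine details may differ slightly. The `is_valid_plate` helper and the sample strings are made up for this check.

```python
import re

# Same pattern as in the explanation; Python's `re` is used here instead of .NET.
PLATE = re.compile(r"^[A-Za-z]{1,3}\d{1,4}$")


def is_valid_plate(text: str) -> bool:
    """Return True when the text is 1 to 3 letters followed by 1 to 4 digits."""
    return PLATE.match(text) is not None


# Two inputs that should pass, then four that should fail for different reasons:
# too many letters, too many digits, no letters, and an extra separator.
for sample in ["ABC1234", "x9", "ABCD1", "AB12345", "1234", "AB-12"]:
    print(sample, is_valid_plate(sample))
```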

7. Project Structuring

Copilot can help organize your code—create folders, organize classes and interfaces, and work with a well-structured project layout.

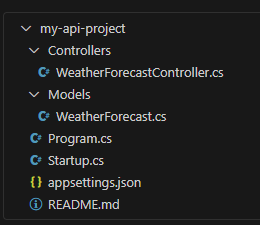

Example:

/new api

Copilot will generate:

8. Bug Detection and Fixing

One of Copilot’s most useful features is its ability to detect bugs and suggest more efficient and optimized fixes.

Example:

/fix var sortedValues = values.OrderByDescending((value, index) => value * weights[index]);

Copilot will generate:

var sortedValues = values
    .Select((value, index) => new { Value = value, Weight = weights[index] })
    .OrderByDescending(pair => pair.Value * pair.Weight)
    .Select(pair => pair.Value)
    .Take(2)
    .ToArray();
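To see what the suggested query computes, here is a rough Python equivalent of the same pipeline: pair each value with its weight, sort by the weighted value in descending order, and keep the first two values. The `top_weighted` name and the sample data are invented for illustration.

```python
def top_weighted(values, weights, count=2):
    """Return the `count` values with the largest value * weight product."""
    # zip() pairs each value with its weight (it stops at the shorter input).
    pairs = zip(values, weights)
    ranked = sorted(pairs, key=lambda pair: pair[0] * pair[1], reverse=True)
    return [value for value, _ in ranked[:count]]


# Hypothetical sample data: the weighted products are 5.0, 12.0, 7.0 and 10.0.
values = [10, 3, 7, 5]
weights = [0.5, 4.0, 1.0, 2.0]
print(top_weighted(values, weights))  # prints [3, 5]
```

Running a small case like this is also a quick way to notice that the suggestion keeps only two results, which the original line did not ask for.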

9. Security and Testing

Even if your code is secure, Copilot can suggest security improvements. It can also generate unit and integration tests—saving time and effort.

Example:

/test Sum

Impact on Programming

GitHub Copilot is changing how programming is done in several ways:

- Improved productivity: By automating repetitive tasks and offering precise code suggestions, Copilot significantly increases developer productivity.

- Accelerated learning curve: Beginners benefit from Copilot’s suggestions to understand syntax, code patterns, and improve their knowledge of languages and algorithms.

- Error reduction: Copilot helps developers avoid common mistakes and ensures code consistency.

- Encouraging experimentation: With Copilot handling boilerplate code, developers can focus more on experimenting and exploring new ideas.

Ethical Considerations and Limitations

While GitHub Copilot offers many benefits, it’s essential to keep its limitations and ethical implications in mind:

- Code quality: Copilot’s suggestions aren’t always perfect—it might provide solutions that don’t fit your intended results. Developers should always review and test generated code.

- Copyright concerns: Since Copilot is trained on publicly available code, there are concerns about ownership. A paid enterprise version exists where Copilot doesn’t share or store code externally, helping maintain security and privacy.

- Over-reliance: Developers should avoid becoming too dependent on Copilot, as it may hinder the development of their own programming skills.

Conclusion

GitHub Copilot represents a significant advancement in AI-assisted programming. Its ability to generate contextually relevant code suggestions has the potential to transform how developers write software. However, it’s crucial to use Copilot responsibly and recognize its limitations. After all, AI lacks the intuition and experience that human developers bring to the table. Therefore, developers remain essential—and AI won’t be replacing us anytime soon.

💡 If you haven’t tried it yet, now is the perfect time to integrate GitHub Copilot into your workflow and boost your productivity! 🚀

The taboo of using Artificial Intelligence in the workplace: Demystifying myths and enhancing benefits

In the digital age, the integration of artificial intelligence (AI) in the workplace has emerged as one of the most transformative factors. Despite the undeniable benefits these tools offer, a lingering taboo still generates concern and resistance in many corporate environments. This phenomenon is largely driven by fears of automation, job displacement, and distrust in algorithm-based decision-making. However, a deeper analysis reveals that, when implemented responsibly, AI not only complements human talent but also enhances efficiency and innovation.

Origins and Causes of the Taboo

Skepticism toward AI in the workplace stems from several factors:

• Fear of Automation: The perception that machines will replace human roles generates anxiety and resistance among workers, particularly in repetitive or administrative jobs.

• Distrust in Automated Decision-Making: The idea of delegating critical decisions to algorithms—whose internal processes sometimes appear opaque—creates uncertainty and concerns about fairness and justice in business decisions.

• Media and Cultural Impact: The portrayal of AI in popular culture and certain media narratives reinforces myths about artificial “intelligence,” fueling fears that often exceed the reality of its application.

Key Benefits of AI Integration in the Workplace

Despite concerns, the benefits AI brings to organizations are numerous and significant:

• Increased Productivity: Automating routine tasks frees up employees to focus on strategic and creative activities, improving operational efficiency.

• Optimized Decision-Making: AI-driven systems can analyze vast amounts of data in real-time, providing valuable insights that enable more informed and accurate decisions.

• Enhanced Customer Service: Tools such as chatbots and recommendation systems personalize the user experience, optimizing interactions and increasing customer satisfaction.

• Cost Reduction and Efficient Resource Use: AI’s ability to identify inefficiencies in processes and optimize resource utilization leads to significant cost savings and better asset management.

• Fostering Innovation: AI creates new opportunities for product and service innovation, driving competitiveness and adaptability in an ever-changing market.

Integration and Adaptation into Organizational Culture

To overcome the taboo and maximize AI’s advantages, organizations must proactively manage change:

• Training and Education: Investing in training programs that familiarize employees with AI tools and their practical applications helps dispel fears and promote a culture of continuous learning.

• Communication and Transparency: Establishing clear communication channels to explain how AI systems function, what decisions they automate, and how they are monitored builds trust and reduces perceptions of opacity.

• Gradual Implementation and Pilot Testing: Gradually adopting AI through pilot projects allows organizations to assess its impact, adjust processes, and tangibly demonstrate benefits, facilitating internal acceptance.

Success Stories and Practical Examples

Various companies have already demonstrated that the responsible use of AI can positively transform the workplace:

• Financial Sector: Banks and financial institutions use AI algorithms for early fraud detection, risk analysis, and personalized services, achieving greater efficiency and security in their operations.

• Customer Service: Telecommunications and e-commerce companies have implemented chatbots that enhance user experience, reduce wait times, and enable 24/7 support without compromising quality.

• Process Optimization: In manufacturing and logistics industries, AI helps predict maintenance needs, manage inventories, and optimize distribution routes, leading to significant cost savings and reduced operational errors.

These examples show that, when carefully implemented, AI does not replace workers but instead enhances their capabilities, allowing them to focus on higher-value tasks.

Ethical Considerations and Implementation Challenges

The adoption of AI in the workplace also requires addressing critical ethical and security issues:

• Avoiding Bias and Ensuring Fairness: Designing algorithms that are fair and free from biases is essential to ensure that automated decisions do not perpetuate existing inequalities.

• Data Protection and Privacy: Responsible data management is crucial to safeguarding employee and customer privacy while complying with international regulations and standards.

• Accountability and Oversight: Establishing audit and control mechanisms to monitor AI systems ensures that they operate in alignment with organizational values and objectives.

Addressing these challenges ethically not only increases trust among internal and external stakeholders but also strengthens a company’s market reputation.

Conclusion

Although the use of artificial intelligence in the workplace has historically been surrounded by taboos and fears, its benefits are undeniable. By automating repetitive tasks, optimizing processes, and improving decision-making, AI serves as a strategic ally for enhancing productivity and innovation. To achieve successful integration, companies must invest in training, foster transparency, and establish robust ethical oversight mechanisms. Ultimately, by breaking the stigma and adopting a responsible approach, businesses can transform their workplaces, drive growth, and secure a competitive edge in an increasingly digital and dynamic market.

Iván L.

Front-end development is constantly evolving, with new technologies and approaches redefining how we build web applications each year. As we approach 2025, it is crucial to understand the trends shaping the future of the industry and prepare for the changes ahead. Below, we explore the key trends that will influence front-end development in the coming months.

1. Server Components in React

React remains one of the most popular frameworks, and with the introduction of Server Components, the rendering model will undergo a significant transformation. This feature allows more UI logic to run on the server, reducing the client-side load and improving overall performance. In e-commerce applications, for instance, products can be rendered on the server and sent pre-processed to the client, decreasing load times and enhancing user experience.

2. More Efficient Frameworks: Qwik, SolidJS, and Svelte

While React and Vue continue to dominate the ecosystem, new frameworks like Qwik, SolidJS, and Svelte are gaining popularity due to their focus on efficiency and performance optimization. These frameworks offer faster load times and a better user experience by minimizing JavaScript usage on the client side. Qwik, for example, implements an extreme “lazy loading” system, only loading the necessary code exactly when needed, preventing unnecessary downloads and improving interaction speed in feature-rich applications.

3. Artificial Intelligence in Development

AI is already transforming web development with tools like GitHub Copilot, ChatGPT, and Codeium. By 2025, these tools will become even more sophisticated, helping developers write code faster, detect errors, and optimize applications more efficiently. For example, GitHub Copilot can autocomplete entire functions based on comments or code snippets, allowing developers to reduce time spent on repetitive tasks and enhance productivity in large-scale projects.

4. CSS Evolution: Container Queries and New Features

CSS continues to evolve with the introduction of Container Queries, enabling more flexible designs without relying on media queries. This means components can adapt based on their container size rather than the full screen size, making layouts more modular and reusable. Additionally, new features like :has(), @scope, and color-mix() will empower designers and developers, allowing for more dynamic and efficient styling without extra JavaScript.

5. Micro-Frontends in More Projects

Micro-frontend architecture allows large applications to be divided into smaller, independent parts, improving scalability and maintenance. Platforms like Netflix, for example, can separate different sections of their application into autonomous modules (such as recommendations management, content library, and video player), allowing updates without affecting other parts of the application. This modular approach facilitates team collaboration and enhances development efficiency in large-scale projects.

Front-end development in 2025 will be defined by efficiency, performance optimization, and the integration of new technologies like Micro-Frontends and Artificial Intelligence. Staying updated will be key for developers to leverage these trends and create innovative web experiences. Are you ready for the future of web development?

Jules P.

In an era where climate change is one of the main challenges for our future, it is crucial for companies to apply favorable environmental practices. Customers look for eco-friendly businesses and employees want to work for ethical companies.

At the same time as companies embark on their sustainable journeys, data analytics is emerging as a transformative force in business. The two concepts may seem to have nothing in common, but a growing number of organizations are applying big data to improve their sustainability. Data analytics allows companies to measure their environmental impact, optimize resources and drive impactful environmental changes.

These past months I have been working for a company that has pioneered sustainable IT practices to measure and reduce its impact on the environment. Since 2021, it has been voted the world’s most sustainable coffee company every year.

Thus, I would like to take this opportunity to talk about how Big Data and analytic tools are used to improve sustainability. This article explores diverse ways in which data analytics empowers companies to become more sustainable.

Energy efficiency and resource management

One of the primary applications of data analytics in sustainability is improving energy efficiency. Analytics tools allow organizations to collect and process data on resource consumption and carbon footprint, and to identify which parts of the company are responsible for the most emissions. With that information they can act to reduce energy consumption and carbon footprint, and develop predictive environmental models.

For example, Google uses data analytics to manage the energy consumption of its data centers. Machine learning algorithms analyze vast amounts of operational data, including temperatures, power usage, cooling efficiency and workload distribution, to find the most energy-efficient configurations. By predicting the optimal cooling configurations, Google has significantly reduced the energy needed for cooling.
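As a rough illustration of the first step, finding which parts of a company account for the most emissions, here is a small Python sketch. The readings, the emission factors and the `emissions_by_source` helper are all hypothetical. A real pipeline would read metered data and use audited factors.

```python
from collections import defaultdict

# Hypothetical readings: (source, kWh consumed, kg CO2e emitted per kWh)
readings = [
    ("data_center", 1200.0, 0.40),
    ("office", 300.0, 0.40),
    ("warehouse", 500.0, 0.25),
    ("data_center", 800.0, 0.40),
]


def emissions_by_source(rows):
    """Return (source, kg CO2e, share of total) tuples, largest emitter first."""
    totals = defaultdict(float)
    for source, kwh, factor in rows:
        totals[source] += kwh * factor
    grand_total = sum(totals.values())
    if grand_total == 0:
        return []
    ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)
    return [(source, kg, kg / grand_total) for source, kg in ranked]


for source, kg, share in emissions_by_source(readings):
    print(f"{source}: {kg:.0f} kg CO2e ({share:.0%})")
```

Ranking sources this way shows where a reduction effort is likely to pay off first.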

Supply chain optimization

The supply chain is a significant area where data analytics can drive sustainability. Through data analysis, companies can track the efficiency of each step in their supply chain. Real-time insights into demand patterns, inventory levels and lead times help them optimize inventory management. The same data allows them to optimize transportation routes, resulting in substantial reductions in emissions, fuel consumption and transportation costs.

Walmart employs data analytics to optimize its supply chain and reduce greenhouse gas emissions. It uses advanced algorithms and GPS devices to gather data on delivery schedules, traffic patterns and fuel consumption. In addition, its real-time inventory management system tracks inventory levels, sales patterns and demand forecasts. By analyzing all this data, Walmart has been able to improve fuel efficiency, reduce overproduction and excess inventory, and decrease its carbon footprint significantly.

Sustainable product design

The design of environmentally friendly products is one of the areas in which most industries are focusing their efforts. Data analytics plays a key role in evaluating the environmental impact of products throughout their entire life cycle. By evaluating data on materials, energy consumption and waste generation, organizations can make informed decisions about product design and development. The use of these tools helps businesses create more sustainable products, use biodegradable materials and reduce excess packaging.

Nike uses data analytics to design sustainable products, such as its Flyknit line of shoes. First, advanced analytics helped the company gather and analyze biomechanical data from athletes, including foot movements, pressure points and performance metrics. Then it used data analytics tools to analyze material usage and waste patterns in traditional shoe manufacturing, which revealed inefficiencies in material cutting and assembly processes. With all these insights, Nike was able to design a lighter shoe with less environmental impact. As a result, the Flyknit manufacturing process reduces material waste by about 60% compared to traditional methods.

Sustainability, goal setting and tracking

Setting and tracking sustainability goals is essential for continuous improvement. By collecting and measuring data from multiple sources, data analytics tools enable real-time monitoring of the company’s environmental impact, allowing organizations to make fast, data-driven decisions. This approach ensures accountability and facilitates continuous improvement in sustainability practices.

For example, Johnson & Johnson uses data analytics to track its progress towards sustainability goals. They use sensors and Internet of Things (IoT) devices to gather data on energy consumption and carbon emissions, feeding this data into analytical models that suggest optimization actions. With this approach they can adjust their strategies, adopt new technologies and refine their goals to ensure ongoing progress.

Environmental trends forecast

A company’s environmental strategy should not only address current processes but also take future predictions into account. Data analytics can support accurate forecasts by analyzing market trends and historical data. Data-based predictive models can identify potential environmental problems before they occur, allowing organizations to act early to prevent those risks or at least minimize their impact.

IBM’s Environmental Intelligence Suite uses data analytics to forecast environmental trends, such as extreme weather events, and assess risks to operations and supply chains. On-demand access to historical weather data informs its predictive models. This allows a company to prepare for and mitigate the impact of environmental changes, ensuring business continuity and reducing potential environmental harm.
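A very simple version of such a predictive model is a straight-line trend fitted to historical data. The Python sketch below assumes hypothetical monthly water usage figures and a made-up limit, and the helper names (`fit_line`, `forecast`, `first_breach`) are illustrative. Real forecasting tools use far richer models, but the idea of projecting a trend to spot a problem early is the same.

```python
def fit_line(ys):
    """Least-squares line through (0, ys[0]), (1, ys[1]), ...: returns (slope, intercept)."""
    n = len(ys)
    if n < 2:
        raise ValueError("need at least two observations")
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x


def forecast(ys, periods_ahead):
    """Project the fitted line `periods_ahead` periods past the last observation."""
    slope, intercept = fit_line(ys)
    return intercept + slope * (len(ys) - 1 + periods_ahead)


def first_breach(ys, limit, horizon=12):
    """Return how many periods ahead the trend first exceeds `limit`, or None."""
    for ahead in range(1, horizon + 1):
        if forecast(ys, ahead) > limit:
            return ahead
    return None


# Hypothetical monthly water usage (thousand litres) and a hypothetical limit.
history = [100.0, 104.0, 109.0, 113.0, 118.0]
limit = 125.0
print(round(forecast(history, 1), 1))
print(first_breach(history, limit))
```

With this sample data the trend crosses the limit two months out, which is the kind of early warning that lets a team act before the problem occurs.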

Final conclusions

Data analytics has emerged as a crucial tool for companies to become more sustainable by providing actionable insights into energy usage, waste management, supply chain efficiency and more. By harnessing the power of data, organizations can gain valuable insights into their operations and market trends. And through predictive modeling and data-driven strategies, businesses can optimize resource allocation, reduce waste and contribute to environmental management.

It is true that integrating data analytics presents several challenges that can vary depending on the size and resources of a company. The complexity and the financial investment required to implement these tools are significant, and these costs can be a major barrier for most businesses. In addition, these tools require an advanced level of expertise to interpret data and translate it into actionable insights. Thus, the integration of data analytics into sustainability should be treated as a strategic investment that aligns with business objectives, ensuring that the benefits justify the costs and complexities involved. Done this way, it not only benefits the environment but also drives cost savings and improves the company’s competitive advantage.

-J.C

In a previous post, I shared what I called the four cardinal points every senior software engineer must follow to progress in their career. One of these is Prioritization by Business Value, which invites you to make decisions driven by their impact on the business. Simplicity and flexibility are key to doing so.

The More Flexible, the More Value

“A good architecture makes the system easy to change, in all the ways that it must change, by leaving options open.”

— Robert C. Martin, Clean Architecture

A flexible architecture allows you to deliver early and long-term business value more easily. One tip for your career as an Agile Software Architect is to prioritize decisions that enable you to delay defining specific architectural details. This includes delaying choices related to frameworks, protocols, databases, configurations, message brokers, and other external dependencies.

By delaying these decisions, you leave room for better choices when you have more information, avoiding premature commitments to technologies that could limit your solution’s adaptability in the future. Clean architecture, for example, helps you abstract these details and focus on core business logic without being tightly coupled to specific technologies early on.
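One way to picture this is a small "port and adapter" sketch in Python. Everything here (`Order`, `OrderRepository`, `InMemoryOrderRepository`, `PlaceOrder`) is a hypothetical example rather than a prescribed design. The business logic depends only on an interface, so the choice of a real database can wait until more is known.

```python
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass(frozen=True)
class Order:
    order_id: str
    total: float


class OrderRepository(Protocol):
    """Port: what the business logic needs, with no storage technology named."""

    def save(self, order: Order) -> None: ...

    def get(self, order_id: str) -> Optional[Order]: ...


class InMemoryOrderRepository:
    """Adapter: good enough for early iterations and tests; replaceable later."""

    def __init__(self) -> None:
        self._orders: dict[str, Order] = {}

    def save(self, order: Order) -> None:
        self._orders[order.order_id] = order

    def get(self, order_id: str) -> Optional[Order]:
        return self._orders.get(order_id)


class PlaceOrder:
    """Use case: core business rule, coupled only to the port."""

    def __init__(self, repository: OrderRepository) -> None:
        self._repository = repository

    def execute(self, order_id: str, total: float) -> Order:
        if total <= 0:
            raise ValueError("order total must be positive")
        order = Order(order_id, total)
        self._repository.save(order)
        return order


repo = InMemoryOrderRepository()
PlaceOrder(repo).execute("A-1", 42.0)
print(repo.get("A-1"))
```

When the team later settles on a database, only a new adapter implementing the same two methods is needed, and `PlaceOrder` stays untouched.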

The Simpler, the More Value

“Everything should be made as simple as possible, but not simpler.”

— Albert Einstein

As Agile Software Architects, our goal is to deliver value in the simplest way possible. Design decisions should focus on reducing unnecessary complexity, especially early in a project. For instance, instead of diving straight into complex architectures like microservices, consider simpler solutions that meet current needs and allow for easy evolution over time.

In my experience, I’ve seen projects that started with microservices from the beginning and failed because this architecture introduced unnecessary complexity. Managing service coordination, network overhead, and data consistency added more challenges than benefits at that stage. A simpler approach, like a monolithic architecture, would have saved time and resources, allowing the team to focus on delivering value earlier.

While microservices are great for scaling when necessary, starting simple and evolving as your system grows keeps your architecture flexible and easier to manage. By avoiding premature decisions, you prevent technical debt and ensure smoother progress.

Let me know your thoughts about this.

-Carlos.G

In our previous article, we emphasized that software architects working within an Agile framework need to be receptive to ongoing changes and continuous feedback. This necessity arises primarily from one central focus – the delivery of continuous value.

Delivering Value with Agile

Adopting an Agile approach means our primary objective is to deliver continuous value, which isn’t always in the form of a completed feature. For instance, suppose there’s uncertainty about the feasibility of a task. In that case, we can formulate and test a hypothesis to gain insights into the issue. This investigative process delivers value to stakeholders. The insights derived from the research inform us whether a task is achievable or not, thereby enabling us to make data-driven decisions and take informed actions.

Initiation and Conclusion of the Design

To answer the question of when to initiate and conclude our design phase for maximizing value delivery, let’s revisit the Software Development Life Cycle (SDLC). Typically, it’s segmented into seven primary phases: planning, requirement analysis, design, development, testing, deployment, and maintenance. Within the Agile framework, we cycle through these stages repeatedly since we incrementally implement solutions. Consequently, we are continually engaged in the design process as we add new features. The design phase concludes when the solution is either complete (if such a point exists) or ceases to add value.

Divide and conquer

“The secret of getting ahead is getting started. The secret of getting started is breaking your complex overwhelming tasks into small manageable tasks, and then starting on the first one.”

Mark Twain

Drawing from my experience, customer-expressed problems often carry an element of ambiguity, which may be attributed to the scale of the issue. Frequently, the identified problem is expansive. In such instances, it’s beneficial to break it down into smaller, more manageable sub-problems. This approach not only simplifies the resolution process but also ensures accuracy in our efforts. Moreover, it allows us to adopt an incremental problem-solving strategy, enabling us to gain feedback with each resolved sub-problem.

Designing involves not only resolving problems but often identifying them as well. My recommendation is to first accurately pinpoint the problem, which necessitates validating the identified requirements. Once done, select the crucial requirements that the design solution will address. These chosen requirements will guide your architectural decisions effectively. The elicited requirements should be chosen based on the scope of the current iteration, meaning different requirements will be addressed in each iteration. In upcoming posts, we will delve deeper into this process of identifying and selecting the correct architectural drivers for each iteration.

Join the Conversation: Share, Learn, and Grow

As we continue to explore the nuances of software design within an Agile framework, your insights and experiences can enrich the conversation. I invite you to share your thoughts, ideas, or questions in the comments section below. Have you faced similar challenges in your projects? How have you addressed them? What strategies have worked for you? I look forward to engaging with your responses and learning from your experiences.

Remember, a shared problem often leads to a shared solution. Together, we can continue to refine our understanding and enhance our practices. Let’s learn and grow together in this journey of continuous improvement.

Stay tuned for the upcoming posts where we’ll dive deeper into the process of identifying and choosing the correct architectural drivers for each iteration. Thank you for reading and contributing to this discussion.

-Carlos.G